So what is the best way to monitor your athletes – subjective self-reporting, or the cold hard facts of objective measurements? This is the ongoing debate in athlete monitoring circles today.

Many performance departments are divided on the relative merits of self-reported subjective measures versus objective physical measurements such as time, distance and quantity.

A recent systematic review of the literature by Anna Saw and colleagues at Deakin University in Australia set out to answer this eternal question. They reviewed research articles covering a wide range of subjective and objective athlete monitoring methods, focusing in particular on:

• Consistency – how reliable and repeatable were the measures?

• Sensitivity – how able were the measures to show change, even when the changes were subtle? Was this different for acute (short term) and chronic (long term) changes?

• How do subjective vs objective measures relate to each other in a typical “mixed methods” approach?

The Research

A wide variety of literature was reviewed in a very systematic manner, taking into account:

• Population: only studies using people engaged actively in routine training.

• Methods: studies had to employ both a valid and reliable subjective method, and at least one objective measure of wellbeing.

• Timing: the subjective and objective measures had to be taken at the same time, taken regularly and not in a competition setting.

Starting from over 4000 potential articles, the authors arrived at a shortlist of 54 high-quality research papers that went into the analysis.

The most common subjective measures of athlete wellbeing were:

• Profile of Mood States (POMS) – including derivatives of the POMS

• Recovery Stress Questionnaire for Athletes (RESTQ-S)

• Daily Analyses of Life Demands of Athletes (DALDA)

Other measures used included:

• The Overtraining Questionnaire of the Société Française de Médecine du Sport (SFMS)

• State-Trait Anxiety Inventory (STAI)

• Perceived Stress Scale (PSS)

• Multi-Component Training Distress Scale (MTDS)

• Competitive State Anxiety Inventory-2 (CSAI-2)

• Derogatis Symptom Checklist (DSC)

• State-Trait Personality Inventory (STPI)

The range of objective measures included data such as:

• Blood measurements of stress and muscle damage (cortisol, creatine kinase, T:C ratio, growth hormone and more)

• Urinary measures of muscle damage (creatinine, urea and others)

• Physiological response measures during exercise (submaximal and maximal lactate, oxygen consumption and heart rates)

• Performance in short and sustained endurance tasks

The various studies also looked at which measures tended to be more sensitive and show changes during acute increases or decreases in training load, or with chronic ongoing training loads.

The Findings

The review uncovered a range of interesting findings, and I would encourage you to read the full article. However, the key findings were as follows:

Finding #1: The relationships between objective and subjective measures were generally poor.

Despite some moderate evidence for relationships in a few examples, in most cases the subjective and objective measures failed to agree. The explanation for this is that objective measures such as blood markers or changes in exercise response may take time to manifest themselves, and therefore show different timing to the immediate self-reported measures.

Finding #2: Subjective measures were more sensitive to training compared to objective measures.

In 22 of the 54 studies, the subjective measures were more consistent (reliable) and sensitive (to changes in training load) than their objective counterparts. Some subjective measures were better than others. For example, on the RESTQ-S questionnaire, six measures (stress, fatigue, recovery, physical recovery, general well-being and “being in shape”) were found to be more sensitive to changes in training load. As an interesting side note, it was found that when scores from multiple questions were combined into overall scores, some sensitivity was lost.
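To see how combining questions can hide a change, here is a minimal sketch in Python. The item names, the 1-5 scale and all of the numbers are made up for illustration; they are not taken from the review or from any particular questionnaire. It shows only one plausible mechanism (averaging dilutes a change in a single item), not the authors' analysis.

```python
from statistics import mean

# Hypothetical 1-5 ratings (higher = worse) on four made-up items,
# before and after a sharp increase in training load.
baseline = {"fatigue": 2, "soreness": 2, "stress": 2, "sleep_difficulty": 2}
after_load = {"fatigue": 4, "soreness": 2, "stress": 2, "sleep_difficulty": 2}


def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return 100.0 * (after - before) / before


# Change seen when looking at the single item that actually moved.
item_change = percent_change(baseline["fatigue"], after_load["fatigue"])

# Change seen when all items are first averaged into one overall score.
composite_change = percent_change(
    mean(baseline.values()), mean(after_load.values())
)

print(f"fatigue item: {item_change:.0f}% change")
print(f"overall score: {composite_change:.0f}% change")
```

In this toy example the fatigue item doubles, while the averaged score moves by only a quarter, so a practitioner watching the overall score alone would see a much weaker signal.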

Finding #3: Some variables are better than others for acute changes

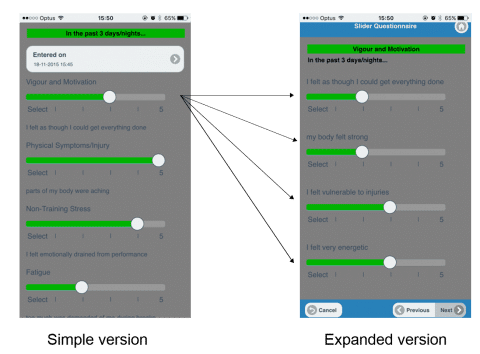

The research indicates that some variables are more sensitive and reflective of acute changes in athlete load and well-being. The following table provides a guide to what may be best to monitor regularly.

Variables that work the best:

• Vigour/motivation

• Physical symptoms/injury

• Non-training stress

• Fatigue

• Physical recovery

• General health/wellbeing

• Being in shape

Variables that may be of less benefit:

• Depression (although this variable may become more valuable if athletes are in an overtrained state)

• Confusion

• Emotional stress

• Social recovery

• Sleep quality

• Self-efficacy

Finding #4: Monitoring regularly in real time

Capturing well-being data regularly rather than retrospectively (such as once per week) is vital. When data is captured retrospectively, it is likely to be heavily biased towards the day(s) immediately preceding collection, rather than accurately reflecting variations from day to day.

The authors recommended using a simple monitoring method of core questions on a daily basis, which could then be supplemented with a more comprehensive questionnaire such as the POMS or RESTQ-S on a weekly basis.

Our 2 Cents

There seems to be a common misconception among many professionals in our industry that self-reported athlete monitoring data is of little or no value due to its subjective nature and perceived lack of reliability. This latest article should serve to dispel a lot of those opinions. It shows that subjective measures of athlete wellness are in fact a valuable part of the monitoring framework, and that they can be used regularly to track both acute and chronic reactions to training load.

One thing we have found is that organisations vary in what they monitor and how they analyse that data, and that most are constantly refining their use of these self-reported measures. We have already seen, however, that clients such as the Australian Institute of Sport are having tremendous success in reducing injuries using simple measures such as time*RPE (session duration multiplied by the athlete's rating of perceived exertion) to monitor self-reported load. The real art is how you then analyse that data over time to identify when athletes are at risk.
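As a rough illustration of the time*RPE idea, the sketch below multiplies each session's duration by its self-reported RPE and sums the results over a week. The `Session` class, the function names, the 0-10 RPE scale and the example numbers are all assumptions made for this example; they do not describe any particular organisation's system.

```python
from dataclasses import dataclass


@dataclass
class Session:
    minutes: float  # session duration in minutes
    rpe: float      # self-reported rating of perceived exertion (0-10 scale assumed)


def session_load(session: Session) -> float:
    """Self-reported load for one session: duration multiplied by RPE."""
    return session.minutes * session.rpe


def weekly_load(sessions: list[Session]) -> float:
    """Total self-reported load across a list of sessions."""
    return sum(session_load(s) for s in sessions)


# Hypothetical training week: three sessions of differing length and effort.
week = [Session(60, 5), Session(45, 7), Session(90, 4)]
print(weekly_load(week))  # 300 + 315 + 360 = 975 arbitrary units
```

The resulting number has no physical unit; its value lies in comparing an athlete against their own history rather than against other athletes.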

Some people we have met feel that objective measures are the “only way to go”, relying solely on systems such as GPS to quantify athlete well-being and load. However, we have to be careful not to rely only on these external measures of load and stress, but also to consider how these loads actually affect the individual athlete. Asking them seems to be a logical solution, and the research shows that it is a valid and reliable tool with which to monitor and protect your athletes.

In summary:

1. Self-reported subjective measures are a valid and reliable method for monitoring athletes

2. Subjective and objective measures may not always agree – don’t be concerned: they serve different purposes and are subject to timing differences

3. Consideration must be given to the value of different questions

4. Keeping it simple is vital to gaining athlete compliance

Stay tuned for more blogs on this and other related topics by joining us on our social media channels.

Full Article: Saw, A, Main, L and Gastin, P. (2015) Monitoring the athlete training response: subjective self-reported measures trump commonly used objective measures: a systematic review. British Journal of Sports Medicine, published online, September 9, 2015. http://bjsm.bmj.com/content/early/2015/09/09/bjsports-2015-094758.short?rss=1